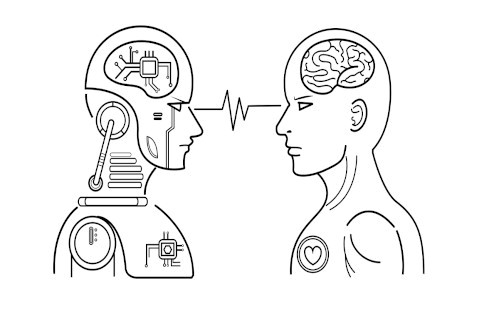

I was still in research and development when a customer came to us with this special pharmaceutical capsule. Very special. I will not go into detail about why the capsule was so special and difficult, but almost 10 years later it is still the toughest challenge in visual inspection we have ever faced.

At first, I thought we were being asked whether machines can inspect the invisible. Our brains overclocked at the sight of the capsule, and we were as afraid as if it were a monster from a fantasy book. This monster had obviously defeated some solutions before ours. Simply sharpening our swords was not enough; we needed magic to fight it. And magic we tried. The magic took a year and a half and over 30,000 hours of development before we were ready for the first fight – The Experiment: Human vs Machine.

THE EXPERIMENT

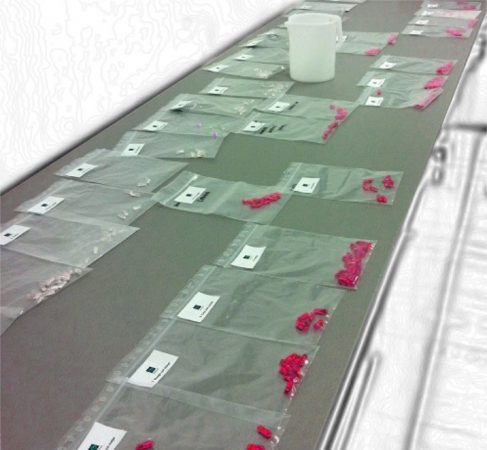

Together with the customer, we took 2 samples of each defect type listed in their SOP, a total of 42 defective capsules. We then marked the 42 samples with a UV marker. The UV marking is invisible to humans and to machines with LED light (the kind we used), so the markings let us evaluate the performance of both the machine and the people. We mixed the marked capsules into a batch of about 200,000 capsules and inspected the batch on the machine with magic, i.e. with the software newly developed for this task. During the inspection we prayed that the machine would defeat the monster, but we did not interfere with the process in any way. After the machine had inspected and sorted the capsules, we sealed the container with the capsules it had rejected as defective and returned it directly to the customer’s team for manual inspection. They weren’t told about the experiment. They were simply instructed to find the defects in the container according to their SOP.

This is me getting ready for The Experiment.

THE PANIC

About a month later, we received the first feedback from the customer’s project team: “Manual inspection found some defects in the container we sent them. We checked their findings with UV light. There were only 6 UV-marked defects.” We panicked: “Wait, what? They only found six?” One explanation was that manual inspection could not find the UV-marked defects because our machine had done a lousy job and sorted them into the containers for good capsules. The other explanation was that the manual inspection was, bluntly put, blind. We were worried because we know a person can be very precise, and we expected them to find more. Did you know that humans can see black dots down to 60 µm? To put that in perspective, a person can almost distinguish a skin cell, which is 30 µm in size! The problem is that when a person wants to inspect something very closely, they need to concentrate, which takes time, typically a few seconds for each product. And we were worried because the manual inspection team had no time pressure.

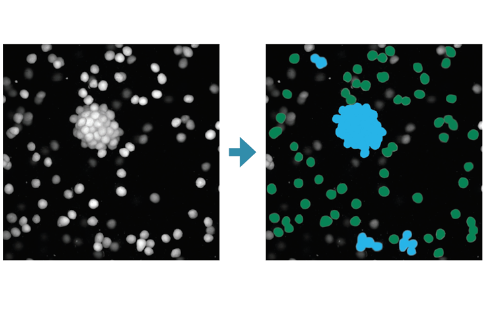

Different types of defects, manually classified and ready for testing and validating the automated inspection machine.

INTO THE DARKNESS

Obviously, the next step was to check where the rest of the UV-marked defects were. We started looking for marked defects among the capsules in the container that the machine had sorted as defective but manual inspection had rated as good. Since we needed to find small UV markings, we had to do this in a completely dark room with UV light. I was there. The monster seemed so much bigger in the dark. All I remember is that I kept saying: they must be here, they must be here! And they were. We found 35 more UV-marked defects! This means the machine found 41 of the 42 marked defects introduced into the batch. It missed only one defect, which was classified as minor. The magic worked. The manual inspection, on the other hand, found only 6 of the 41 marked defects in the container and missed some of the critical ones! The panic was suddenly on the customer’s side: “Are we doing anything at all with the manual inspection?”
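For readers who like to see the numbers as rates, here is a minimal sketch of the arithmetic behind the result. The counts come straight from the experiment; the function and variable names are my own illustration and not part of any inspection software.

```python
# Counts taken from the experiment described above.
MARKED_TOTAL = 42    # UV-marked defective capsules mixed into the batch
MACHINE_FOUND = 41   # marked defects the machine sorted as defective
MANUAL_FOUND = 6     # marked defects manual inspection found in that container


def detection_rate(found: int, total: int) -> float:
    """Return the share of known defects that were detected (hypothetical helper)."""
    if total <= 0 or not 0 <= found <= total:
        raise ValueError("found must be between 0 and a positive total")
    return found / total


# The machine saw all 42 marked defects in the batch.
machine_rate = detection_rate(MACHINE_FOUND, MARKED_TOTAL)

# Manual inspection only saw the container of machine rejects,
# so its denominator is the 41 marked defects that were in there.
manual_rate = detection_rate(MANUAL_FOUND, MACHINE_FOUND)

print(f"Machine: {machine_rate:.1%}")  # Machine: 97.6%
print(f"Manual:  {manual_rate:.1%}")   # Manual:  14.6%
```

Note that the two rates use different denominators, because manual inspection never had the chance to find the one defect the machine missed.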

Manual inspection took place on a “semi-automatic” machine with a conveyor belt and extra light. Inspection and sorting were done manually.

THE TRUST

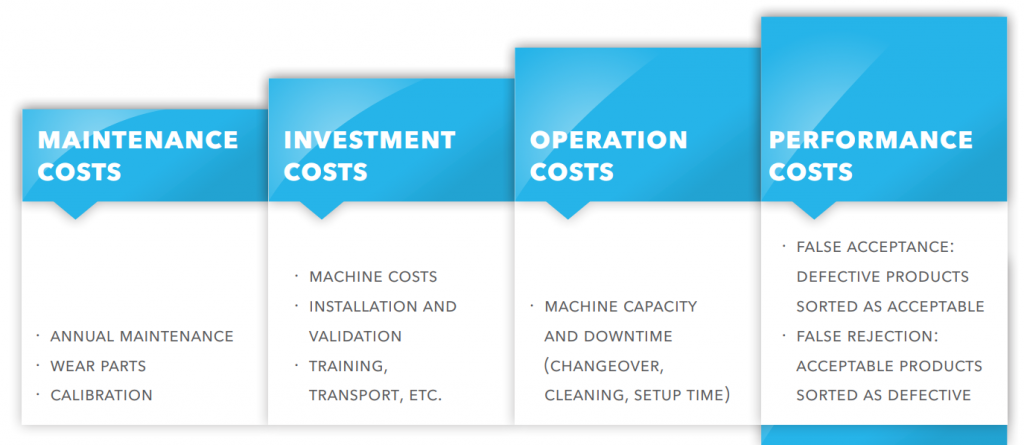

Showing what manual inspection can or cannot do with this special capsule was not the goal of the experiment. The aim was to give the customer confidence in automatic inspection machines, and in that we succeeded. However, we should not generalize the results of this experiment to all products and machines. Not all inspection machines work with the same reliability, and we did not even discuss false rejection (good products sorted as defective), which leads to direct business losses.

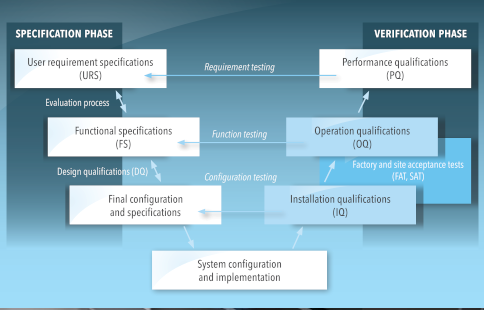

That’s why a little testing will help you understand complex machines and gain confidence in them. In this white paper, Sensum describes the pitfalls of investing in machines in a little more detail. The paper focuses on inspection machines, but it is not difficult to draw parallels with any other investment. My advice is to make investment decisions based on actual figures, not on data found in sales brochures.

This article was originally published by Miha Možina, PhD, on LinkedIn:

– https://www.linkedin.com/pulse/experiment-human-vs-machine-miha-mozina-phd/